Next.js

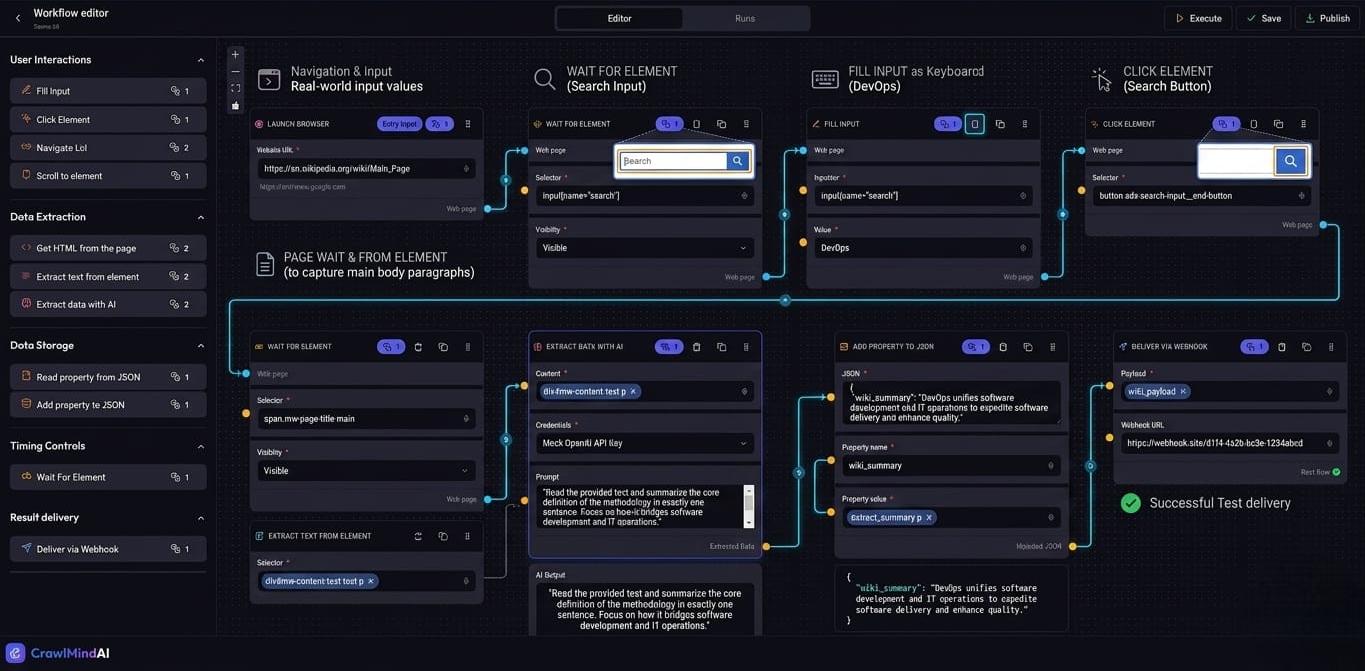

CrawlMindAI — Visual Web Automation & AI Extraction SaaS

No-code nodes: 0 → multiple distinct executors

Traditional web scraping requires writing custom scripts, maintaining CSS/XPath selectors, and working around anti-scraping blockers. Scaling these pipelines by hand is error-prone, and every new target website needs a developer.


// the problem

What Was Broken

- Building web scrapers typically requires custom Puppeteer or Python scripts

- Hardcoded selectors break frequently and require constant maintenance

- Non-technical users (marketers, growth hackers) depend on developers for data extraction

- Scraping dynamic single-page applications (SPAs) is complex to coordinate

// required fix

- Create a drag-and-drop visual workflow builder using React Flow

- Develop a robust background execution engine running headless Puppeteer

- Integrate LLMs (OpenAI gpt-4o-mini) for intelligent data parsing and structured extraction

- Implement credit-based billing (Stripe) and robust authentication (Clerk)

- Enable cron-based automated scheduling of scraping workflows
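The scheduling requirement comes down to checking a cron expression against the clock. The following is a minimal sketch of that check, assuming standard five-field expressions (minute, hour, day of month, month, day of week) and supporting only `*`, numbers, ranges, comma lists and `/` steps. The `fieldMatches`, `matchesCron` and `nextRun` helpers are hypothetical and do not reflect how the project actually schedules runs; month/day names and Sunday written as 7 are not handled.

```typescript
// Does one cron field ("*", "*/15", "1-5", "0,30", ...) match the value?
// `min` is the field's lowest legal value, used as the start of "*" ranges.
function fieldMatches(field: string, value: number, min: number): boolean {
  return field.split(",").some((part) => {
    const slash = part.indexOf("/");
    const range = slash === -1 ? part : part.slice(0, slash);
    const stepText = slash === -1 ? undefined : part.slice(slash + 1);
    const step = stepText === undefined ? 1 : Number(stepText);
    if (!Number.isInteger(step) || step < 1) throw new Error(`Bad step in "${part}"`);
    let lo: number;
    let hi: number;
    if (range === "*") {
      lo = min;
      hi = Infinity;
    } else if (range.includes("-")) {
      [lo, hi] = range.split("-").map(Number);
    } else {
      lo = Number(range);
      hi = stepText === undefined ? lo : Infinity; // "a/n": from a upward in steps of n
    }
    if (Number.isNaN(lo) || Number.isNaN(hi)) throw new Error(`Bad field "${part}"`);
    return value >= lo && value <= hi && (value - lo) % step === 0;
  });
}

// Five fields: minute, hour, day of month, month, day of week (0 = Sunday).
function matchesCron(expr: string, date: Date): boolean {
  const fields = expr.trim().split(/\s+/);
  if (fields.length !== 5) throw new Error(`Expected 5 fields in "${expr}"`);
  const [minute, hour, dom, month, dow] = fields;
  const timeOk =
    fieldMatches(minute, date.getMinutes(), 0) &&
    fieldMatches(hour, date.getHours(), 0) &&
    fieldMatches(month, date.getMonth() + 1, 1);
  const domOk = fieldMatches(dom, date.getDate(), 1);
  const dowOk = fieldMatches(dow, date.getDay(), 0);
  // Classic cron rule: when both day fields are restricted, either may match.
  const dayOk = dom !== "*" && dow !== "*" ? domOk || dowOk : domOk && dowOk;
  return timeOk && dayOk;
}

// Brute-force next run time: step forward one minute at a time, up to a year.
function nextRun(expr: string, from: Date): Date {
  const t = new Date(from);
  t.setSeconds(0, 0);
  for (let i = 0; i < 366 * 24 * 60; i++) {
    t.setMinutes(t.getMinutes() + 1);
    if (matchesCron(expr, t)) return new Date(t);
  }
  throw new Error(`No run time within a year for "${expr}"`);
}
```

For example, `nextRun("0 9 * * 1", now)` gives the next Monday at 09:00 in local time, which a scheduler could store as a workflow's next run. Stepping minute by minute is chosen here only because it is easy to verify; a production scheduler would more likely rely on an established cron library.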

// solution

How It Was Built

Architected a full-stack Next.js application with a custom topological execution planner that turns the visual graph into ordered phases, a browser session whose state persists across those phases, and credit-based monetization that charges for each executed phase.
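To make per-phase billing concrete, here is a minimal in-memory sketch that charges credits for each phase before it runs. The node type names, their prices, and the `costOf`/`chargePhase`/`estimateWorkflowCost` helpers are hypothetical illustrations, not the project's values or code; buying credits through Stripe is outside this sketch.

```typescript
// Hypothetical credit prices per node type (illustrative values only).
const CREDIT_COST: Record<string, number> = {
  LAUNCH_BROWSER: 5,
  PAGE_TO_HTML: 2,
  EXTRACT_DATA_WITH_AI: 4,
};

interface LedgerEntry { phaseId: string; nodeType: string; credits: number }
interface Ledger { balance: number; entries: LedgerEntry[] }

// Look up a node type's price; unknown types are an error, not free.
function costOf(nodeType: string): number {
  const cost = CREDIT_COST[nodeType];
  if (cost === undefined) throw new Error(`No credit price for node type "${nodeType}"`);
  return cost;
}

// Charge a phase before it runs. Returns false (and charges nothing)
// when the balance cannot cover it, so the workflow can stop at that phase.
function chargePhase(ledger: Ledger, phaseId: string, nodeType: string): boolean {
  const credits = costOf(nodeType);
  if (ledger.balance < credits) return false;
  ledger.balance -= credits;
  ledger.entries.push({ phaseId, nodeType, credits });
  return true;
}

// Up-front estimate for a whole workflow: the sum of its phases' prices.
function estimateWorkflowCost(nodeTypes: string[]): number {
  return nodeTypes.reduce((sum, type) => sum + costOf(type), 0);
}
```

Keeping one ledger entry per phase is what allows cost accounting at phase granularity. In a multi-user deployment the balance check and the decrement would need to happen atomically (for example in a single conditional database update) so that two concurrent runs cannot spend the same credits.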


Visual Graph to Execution Plan

Built an execution planner that parses the React Flow JSON graph definition, checks each node's dependencies, and topologically sorts the nodes into an ordered ExecutionPlan whose phases run one after another.
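The planning step can be sketched as a layered topological sort (Kahn's algorithm). This is a minimal illustration, assuming the saved React Flow graph reduces to node ids and types plus source/target edges; `FlowNode`, `FlowEdge`, `ExecutionPhase` and `flowToExecutionPlan` are hypothetical names, and the project's own planner (elided in the excerpt) may also validate node inputs, which this sketch does not.

```typescript
// Hypothetical minimal shapes for the saved React Flow graph.
interface FlowNode { id: string; type: string }
interface FlowEdge { source: string; target: string }
interface ExecutionPhase { phase: number; nodes: FlowNode[] }

// Layered topological sort (Kahn's algorithm): phase 1 holds nodes with no
// incoming edges; each later phase holds nodes whose parents are all scheduled.
function flowToExecutionPlan(nodes: FlowNode[], edges: FlowEdge[]): ExecutionPhase[] {
  const indegree = new Map<string, number>();
  const children = new Map<string, string[]>();
  for (const node of nodes) {
    indegree.set(node.id, 0);
    children.set(node.id, []);
  }
  for (const { source, target } of edges) {
    if (!indegree.has(source) || !indegree.has(target)) {
      throw new Error(`Edge ${source} -> ${target} references an unknown node`);
    }
    indegree.set(target, indegree.get(target)! + 1);
    children.get(source)!.push(target);
  }

  const byId = new Map(nodes.map((node) => [node.id, node] as const));
  const phases: ExecutionPhase[] = [];
  let ready = nodes.filter((node) => indegree.get(node.id) === 0).map((node) => node.id);
  let scheduled = 0;

  while (ready.length > 0) {
    phases.push({ phase: phases.length + 1, nodes: ready.map((id) => byId.get(id)!) });
    scheduled += ready.length;
    const next: string[] = [];
    for (const id of ready) {
      for (const child of children.get(id)!) {
        const remaining = indegree.get(child)! - 1;
        indegree.set(child, remaining);
        if (remaining === 0) next.push(child); // all parents now scheduled
      }
    }
    ready = next;
  }

  // Nodes left unscheduled can only be stuck in a cycle.
  if (scheduled !== nodes.length) throw new Error("Workflow graph contains a cycle");
  return phases;
}
```

For a diamond-shaped graph (one launch node feeding two branches that both feed a final node) this yields three phases, with both branches sharing phase 2. A graph with a cycle is rejected because its nodes never reach an in-degree of zero.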

lib/workflow/executionPlan.ts

```typescript
// Resolving inputs & grouping nodes into sequential phases
const phases = [];
// topological sort logic...
return ExecutionPlan(phases);
```

Dynamic Execution Engine & Browser State

Developed an execution engine that iterates through the phases in order. It creates a shared Environment holding the Puppeteer Browser and Page instances and hands it to every node's executor, so browser state carries over between visual nodes (e.g. Login → Wait → Scrape).
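A self-contained sketch of the phase loop and input resolution, under stated assumptions: node inputs are plain strings, edges carry React Flow-style `sourceHandle`/`targetHandle` names that wire one node's output to another node's input, and a string field stands in for the Puppeteer `Browser`/`Page` so the example runs without a browser. `WorkflowNode`, `WorkflowEdge`, `Environment`, `resolveInputs`, `executeWorkflow` and the `NAVIGATE`/`STRIP_TAGS` node types are hypothetical illustrations, not the project's actual code.

```typescript
// Hypothetical minimal shapes; the real project's node and edge data is richer.
interface WorkflowNode { id: string; type: string; inputs: Record<string, string> }
interface WorkflowEdge { source: string; sourceHandle: string; target: string; targetHandle: string }

// Shared state handed to every executor. The real engine keeps Puppeteer's
// Browser and Page here; a plain string field stands in so the sketch runs alone.
interface Environment { pageHtml?: string }

type Outputs = Record<string, string>;
type Executor = (env: Environment, inputs: Record<string, string>) => Promise<Outputs>;

// Start from values typed into the node, then let edges override inputs
// with outputs produced by upstream nodes in earlier phases.
function resolveInputs(
  node: WorkflowNode,
  edges: WorkflowEdge[],
  outputs: Map<string, Outputs>,
): Record<string, string> {
  const inputs = { ...node.inputs };
  for (const edge of edges) {
    if (edge.target !== node.id) continue;
    const value = outputs.get(edge.source)?.[edge.sourceHandle];
    if (value === undefined) {
      throw new Error(`Node ${node.id}: upstream output "${edge.sourceHandle}" is missing`);
    }
    inputs[edge.targetHandle] = value;
  }
  return inputs;
}

// Run phases in order; every executor receives the same Environment object.
async function executeWorkflow(
  phases: WorkflowNode[][],
  edges: WorkflowEdge[],
  registry: Record<string, Executor>,
): Promise<{ env: Environment; outputs: Map<string, Outputs> }> {
  const env: Environment = {};
  const outputs = new Map<string, Outputs>();
  for (const phase of phases) {
    for (const node of phase) {
      const executor = registry[node.type];
      if (!executor) throw new Error(`No executor registered for "${node.type}"`);
      outputs.set(node.id, await executor(env, resolveInputs(node, edges, outputs)));
    }
  }
  return { env, outputs };
}

// Usage: a fake "navigate" step stores HTML in the shared environment,
// and a downstream step receives that HTML through an edge.
const registry: Record<string, Executor> = {
  NAVIGATE: async (env, inputs) => {
    env.pageHtml = `<h1>${inputs.url}</h1>`; // stand-in for page.goto() + page.content()
    return { html: env.pageHtml };
  },
  STRIP_TAGS: async (_env, inputs) => ({ text: inputs.html.replace(/<[^>]+>/g, "") }),
};
```

Because every executor receives the same `env` object, whatever one phase leaves there (here `pageHtml`; in the real engine an open page with its session) is what the next phase starts from.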

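lib/workflow/executeWorkflow.ts

```typescript
import puppeteer from "puppeteer";

// Shared environment: every phase reuses the same Browser and Page
const browser = await puppeteer.launch();
const environment = { browser, page: await browser.newPage() };

for (const phase of executionPlan.phases) {
  const inputs = await resolveInputs(phase); // gather this phase's inputs
  await ExecutorRegistry[phase.nodeType](environment, inputs);
}
```

AI Data Extraction Node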

Implemented a specialized executor that decrypts the user's API key (stored symmetrically encrypted in the database) and sends the raw HTML to gpt-4o-mini with a strict system prompt, so the model returns the extracted data as a structured JSON array.
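The executor code calls two helpers, `symmetricDecrypt` and `parseOutput`, whose implementations are not shown. A possible sketch of both, assuming AES-256-GCM with a 32-byte key for the stored credential and a model reply that may wrap the JSON array in a Markdown code fence. Unlike the one-argument `symmetricDecrypt` in the executor, this hypothetical version takes the key explicitly to stay self-contained, and the `iv:tag:ciphertext` hex storage format is also an assumption.

```typescript
import { createCipheriv, createDecipheriv, randomBytes } from "node:crypto";

// AES-256-GCM: authenticated symmetric encryption; `key` must be 32 bytes.
function symmetricEncrypt(plaintext: string, key: Buffer): string {
  const iv = randomBytes(12); // fresh 96-bit nonce for every encryption
  const cipher = createCipheriv("aes-256-gcm", key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, "utf8"), cipher.final()]);
  const tag = cipher.getAuthTag();
  // Store nonce, auth tag and ciphertext together as one string.
  return [iv, tag, ciphertext].map((b) => b.toString("hex")).join(":");
}

function symmetricDecrypt(stored: string, key: Buffer): string {
  const [ivHex, tagHex, dataHex] = stored.split(":");
  const decipher = createDecipheriv("aes-256-gcm", key, Buffer.from(ivHex, "hex"));
  decipher.setAuthTag(Buffer.from(tagHex, "hex")); // tampered data makes final() throw
  const plain = Buffer.concat([decipher.update(Buffer.from(dataHex, "hex")), decipher.final()]);
  return plain.toString("utf8");
}

// Parse the model reply into a JSON array, tolerating a surrounding code fence.
function parseOutput(content: string | null): unknown[] {
  if (!content) throw new Error("Model returned an empty response");
  const text = content.trim().replace(/^```(?:json)?\s*/i, "").replace(/\s*```$/, "");
  const parsed: unknown = JSON.parse(text);
  if (!Array.isArray(parsed)) throw new Error("Model output is not a JSON array");
  return parsed;
}
```

GCM is used here because its authentication tag makes a modified ciphertext fail to decrypt instead of yielding a corrupted key. Rejecting non-array output in `parseOutput` keeps a chatty or malformed model reply from flowing silently into downstream nodes.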

lib/workflow/executor/ExtractDataWithAiExecutor.ts

```typescript
import OpenAI from "openai";

// Decrypt the user's stored key and create a client scoped to it
const apiKey = symmetricDecrypt(credential.value);
const openai = new OpenAI({ apiKey });

const response = await openai.chat.completions.create({
  model: "gpt-4o-mini",
  messages: [{ role: "system", content: "Return pure JSON array." }, { role: "user", content: rawHtml }],
});

return parseOutput(response.choices[0].message.content);
```

// results

What Changed

Launched a web automation SaaS platform that makes data extraction accessible to non-developers through visual programming and AI.

- No-Code Nodes: 0 → Visual flow building

- AI Extraction: Regex/CSS selectors → Resilient scraping

- Execution Environment: Local scripts → Automated pipelines

- Monetization: None → Granular Stripe credits

"Democratized web scraping, enabling non-technical users to build complex pipelines. Managed heavy background compute loads while maintaining precise cost accounting per phase."