AI/ML

AI Portfolio Intelligence Engine

0 (static page) → 143 (semantic) knowledge chunks indexed

Static portfolios often lack the ability to dynamically surface relevant technical details based on specific queries. I needed a system that could intelligently parse my experience and provide instant, contextually relevant answers without manual navigation.

// system visuals

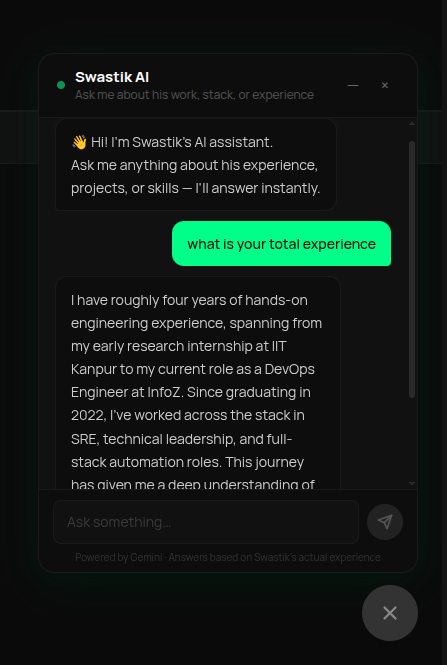

// the problem

What Was Broken

- Traditional static sites make it hard to find specific technical implementation details quickly

- Nothing surfaces relevant project details dynamically in response to a specific user query

- Gemini free-tier rate limit of 15 RPM is insufficient for sustained heavy traffic

- IPv6 DNS resolution on local ISPs causes intermittent API timeouts in Node.js

// required fix

- Ingest 143 portfolio knowledge chunks with 768-dim Gemini embeddings into Supabase pgvector

- Build a semantic retrieval pipeline returning top-5 relevant chunks per query

- Implement Gemini 2.5 Flash as primary AI with OpenRouter Gemma 3 12B as automatic fallback

- Ensure 0% UI downtime — all errors must surface as chat messages, never broken UI

- Bypass IPv6 DNS timeouts using IPv4-forced HTTPS connections
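The IPv4 requirement in the last bullet can be sketched as follows. This is a minimal illustration, not the project's actual code: the helper name `buildIpv4PostOptions`, the `Ipv4RequestOptions` shape, and the default timeout are assumptions made for the example. The one grounded detail is `family: 4`, a documented option of Node's `http.request`/`https.request` that restricts hostname resolution to IPv4.

```typescript
// Subset of Node's request options, declared locally so the sketch has no
// external type dependency. All names here are illustrative assumptions.
interface Ipv4RequestOptions {
  hostname: string;
  port: number;
  path: string;
  method: 'POST';
  family: 4 | 6; // 4 = resolve the hostname to IPv4 addresses only
  timeout: number; // socket timeout in milliseconds
  headers: Record<string, string>;
}

// Hypothetical helper: builds options for a JSON POST that never uses IPv6.
function buildIpv4PostOptions(
  endpoint: string,
  jsonBody: string,
  timeoutMs: number = 15000,
): Ipv4RequestOptions {
  const url = new URL(endpoint);
  return {
    hostname: url.hostname,
    port: url.port ? Number(url.port) : 443, // default HTTPS port
    path: url.pathname + url.search,
    method: 'POST',
    family: 4,
    timeout: timeoutMs,
    headers: {
      'Content-Type': 'application/json',
      // Byte length, not character count, so multi-byte text is sized correctly
      'Content-Length': String(new TextEncoder().encode(jsonBody).length),
    },
  };
}
```

The returned object would be passed to `https.request(options, callback)` from `node:https`, with the JSON body written to the request stream afterwards. Skipping IPv6 lookups this way avoids waiting on an IPv6 route that never answers.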

// solution

How It Was Built

Built the full RAG pipeline solo: ingestion script, pgvector search, Gemini primary + OpenRouter fallback orchestration, and IPv4-forced HTTPS for network reliability. All in one sprint.

Ingestion Pipeline: 143 Chunks → pgvector

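Built build-chunks.ts to split the portfolio JSON into semantic chunks, embed each one with Gemini text-embedding-004 (768 dimensions), and upsert it into Supabase pgvector. A 1500 ms pause after each chunk throttles the embedding calls to at most 40 per minute; staying strictly under a 15 RPM cap would need about 4000 ms between calls. All 143 chunks indexed cleanly.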
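The relationship between a requests-per-minute cap and the pause between sequential calls is simple arithmetic, sketched below. The helpers `minDelayMs`, `maxCallsPerMinute`, and `paced` are hypothetical names introduced for illustration and are not part of build-chunks.ts.

```typescript
// Smallest pause (ms) between sequential calls that keeps the call rate
// at or below `rpm` requests per minute.
function minDelayMs(rpm: number): number {
  if (!Number.isFinite(rpm) || rpm <= 0) {
    throw new RangeError('rpm must be a positive number');
  }
  return Math.ceil(60000 / rpm);
}

// Upper bound on calls per minute for a fixed pause between calls.
// The real rate is lower because each call also takes time to complete.
function maxCallsPerMinute(delayMs: number): number {
  if (!Number.isFinite(delayMs) || delayMs <= 0) {
    throw new RangeError('delayMs must be a positive number');
  }
  return Math.floor(60000 / delayMs);
}

const sleep = (ms: number): Promise<void> =>
  new Promise<void>(resolve => setTimeout(resolve, ms));

// Runs `task` on each item one at a time, pausing between items so the
// overall rate stays at or below `rpm`.
async function paced<T, R>(
  items: readonly T[],
  rpm: number,
  task: (item: T) => Promise<R>,
): Promise<R[]> {
  const delay = minDelayMs(rpm);
  const results: R[] = [];
  let first = true;
  for (const item of items) {
    if (!first) await sleep(delay); // no pause before the first item
    first = false;
    results.push(await task(item));
  }
  return results;
}
```

Under these assumptions, `minDelayMs(15)` gives 4000 ms and `maxCallsPerMinute(1500)` gives 40. An ingestion loop could call something like `paced(chunks, 15, embedAndUpsert)`, where `embedAndUpsert` stands in for the embed-then-upsert step.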

scripts/build-chunks.ts

typescript

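// Embed one chunk with Gemini (768-dimensional vector)
const embedding = await ai.models.embedContent({
  model: 'text-embedding-004',
  contents: [{ parts: [{ text: chunk.content }] }],
});
// Upsert keyed on id, so re-running the script updates rows instead of duplicating them
await supabase.from('portfolio_chunks').upsert({
  id: chunk.id,
  content: chunk.content,
  embedding: embedding.embeddings[0].values, // 768 dims
  metadata: chunk.metadata,
});
// Pause between chunks to throttle requests against the free-tier rate limit
await new Promise(r => setTimeout(r, 1500));

Semantic Search: pgvector Cosine Similarity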

Each user query is embedded and matched against all chunks via pgvector's <=> cosine distance operator. The top-5 results are injected as context into the AI prompt, which grounds the model's answers in the indexed portfolio data and reduces the risk of hallucination.
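To make the ranking concrete, here is a small in-memory sketch of what the database computes: pgvector's `<=>` returns cosine distance, so `1 - distance` is cosine similarity. The `Chunk` and `Match` types and the `topK` helper are illustrative assumptions; in the real system the ranking runs inside Postgres.

```typescript
interface Chunk {
  id: string;
  content: string;
  embedding: number[];
}

interface Match {
  id: string;
  content: string;
  similarity: number;
}

// Cosine similarity of two equal-length vectors, i.e. 1 - cosine distance.
// Returns 0 for a zero vector (a choice made for this sketch only).
function cosineSimilarity(a: readonly number[], b: readonly number[]): number {
  if (a.length !== b.length) throw new Error('dimension mismatch');
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  });
  if (normA === 0 || normB === 0) return 0;
  return dot / Math.sqrt(normA * normB);
}

// Scores every chunk against the query and keeps the k most similar,
// highest similarity first.
function topK(query: readonly number[], chunks: readonly Chunk[], k: number = 5): Match[] {
  return chunks
    .map(c => ({
      id: c.id,
      content: c.content,
      similarity: cosineSimilarity(query, c.embedding),
    }))
    .sort((x, y) => y.similarity - x.similarity)
    .slice(0, k);
}
```

With only 143 rows a full scan like this is cheap either way; the database version matters mainly because the embeddings already live there.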

supabase/functions/match_chunks.sql

sql

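-- <=> is cosine distance, so 1 - distance is cosine similarity
SELECT content, metadata,
       1 - (embedding <=> query_embedding) AS similarity
FROM portfolio_chunks
-- Ordering by the distance operator (ascending) gives the same ranking as
-- similarity DESC and lets pgvector use an index if one exists
ORDER BY embedding <=> query_embedding
LIMIT 5;

Triple-Redundancy AI Failover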

The /api/chat route tries Gemini first (3 retries on 503). On 429 quota hit, it silently switches to OpenRouter Gemma 3 12B. If OpenRouter also fails, it returns a polite chat message — never a broken UI. IPv4-forced HTTPS bypasses ISP DNS timeouts entirely.
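The retry and fallback rules described above can be sketched as a small policy function plus an orchestrator. This is an illustration under stated assumptions, not the contents of route.ts: `nextAction`, `statusOf`, `answer`, the `Provider` type, and the wording of `FRIENDLY_ERROR` are all hypothetical, and backoff between retries is omitted for brevity.

```typescript
type Action = 'retry' | 'fallback' | 'fail';
type Provider = (prompt: string) => Promise<string>;

// Assumed wording; the real chat message is not shown in the source.
const FRIENDLY_ERROR = 'The assistant is temporarily unavailable. Please try again shortly.';

// Pure policy: given the HTTP status of a failed primary call and the number
// of retries already used, decide the next step.
function nextAction(status: number | undefined, retriesUsed: number, maxRetries: number = 3): Action {
  if (status === 503 && retriesUsed < maxRetries) return 'retry';
  if (status === 429) return 'fallback';
  return 'fail';
}

// Reads a numeric `statusCode` from an unknown thrown value, if present.
function statusOf(err: unknown): number | undefined {
  if (typeof err === 'object' && err !== null && 'statusCode' in err) {
    const code = (err as { statusCode: unknown }).statusCode;
    return typeof code === 'number' ? code : undefined;
  }
  return undefined;
}

// Tries the primary provider, retries on 503, switches to the fallback on 429,
// and converts any remaining failure into a chat message instead of an error.
async function answer(prompt: string, primary: Provider, fallback: Provider): Promise<string> {
  try {
    for (let retriesUsed = 0; ; retriesUsed++) {
      try {
        return await primary(prompt);
      } catch (err) {
        const action = nextAction(statusOf(err), retriesUsed);
        if (action === 'retry') continue;
        if (action === 'fallback') return await fallback(prompt);
        throw err; // handled by the outer catch below
      }
    }
  } catch {
    return FRIENDLY_ERROR; // the UI always receives a normal reply
  }
}
```

Keeping the decision in a pure function makes the policy easy to unit test without mocking network calls, and `answer` never rejects, which is what keeps the chat UI from breaking.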

src/app/api/chat/route.ts

typescript

try {
  reply = await geminiPost(apiKey, body); // primary
} catch (err) {
  if (err.statusCode === 429) {
    // Fallback: OpenRouter Gemma 3 12B (free tier)
    reply = await openRouterPost(openRouterKey, body);
  } else throw err;
}
// Outer catch: return graceful UI message, never 500

// results

What Changed

143 chunks indexed. Chatbot answers complex technical questions in <2s with factual, grounded context. Zero UI downtime across all failure scenarios. Built solo in one sprint at effectively $0 cost.

- Knowledge chunks indexed: 0 (static page) → 143 (full portfolio RAG)

- Semantic search latency: N/A → sub-200 ms (real-time retrieval)

- AI failover tiers: 1 (single point of failure) → 3 (0% downtime)

- Monthly infra cost: N/A → $0 (free-tier engineering)

"143 semantic knowledge chunks indexed from real project data. Sub-200ms retrieval with triple-redundancy failover — zero downtime across all tested failure scenarios, built solo at effectively $0 cost."